The Art of Primary Research: How the Best Deal Teams Build Conviction

Nearly 60% of deals fail due to poor diligence. Here's how top deal teams use primary research to validate theses and uncover risks before they become write-downs.

✦

Almost 60% of deals fail because due diligence missed critical issues. That statistic from Bain & Company should unsettle anyone deploying capital, yet the response from most investment teams has been to gather more data rather than better data. Secondary research tells you what the market already knows. Analyst reports aggregate consensus. Management presentations polish reality into something more palatable. Somewhere between the deck and the truth lies a gap, and in that gap, fortunes are made and lost. Investment teams burn weeks on logistics, pay research prices for what amounts to access, and emerge with colour rather than conviction. The firms that consistently outperform have learned that bridging this gap requires better questions asked of the right people, not more spreadsheets. This is an inside look at the methodologies top-tier deal teams use to gather original intelligence, validate investment theses, and uncover hidden risks before they become write-downs.

The ground has shifted

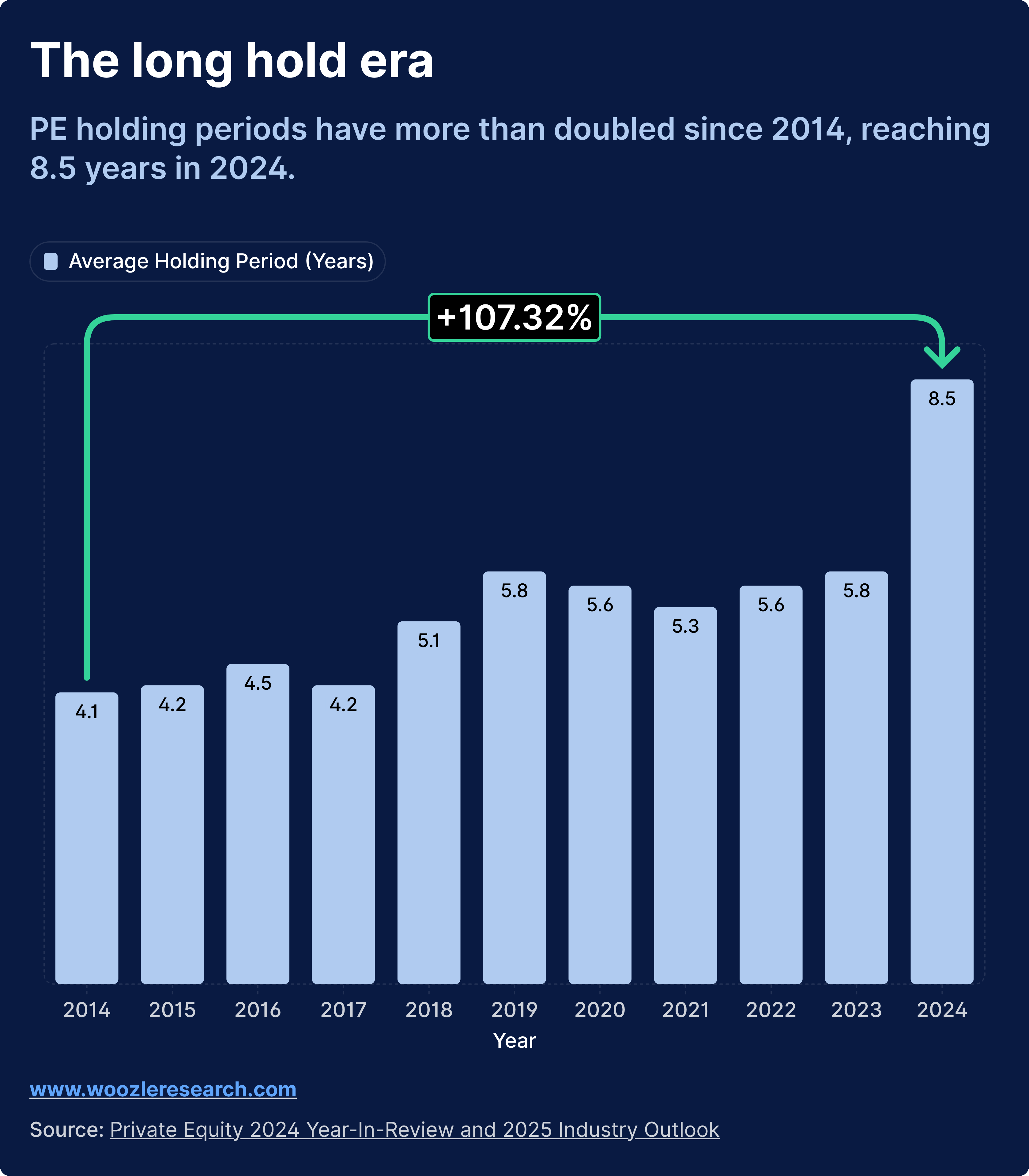

Before the global financial crisis, private equity holding periods averaged around four years. Investors could underwrite to a quick flip, accept some diligence shortcuts, and exit before structural problems surfaced. That world no longer exists.

Private equity holding periods hit 8.5 years in 2024, more than double the 4.1 years observed in 2007. When you hold assets twice as long as historical norms, getting the initial diligence right becomes critical. There is less room for error when conviction, sizing, and timing decisions must hold up for nearly a decade.

The nature of dealmaking has shifted as well. Add-on deals now represent 74% of all PE transactions, up from 59% in 2014. Rather than conducting diligence once at the platform acquisition, investment teams now run repeated primary research projects for bolt-on acquisitions throughout the holding period. The cumulative analyst time and research spend adds up fast.

Meanwhile, global PE dealmaking rose 14% to $2 trillion in 2024, making it the third-most-active year on record. Buyout investment value increased 37% year over year to $602 billion, excluding add-ons. As deal flow increases, the temptation to rush diligence grows. But longer holding periods and more add-on activity have made commercial due diligence more complex, which makes investment-grade primary research more important than ever.

The firms that have adapted to this environment share a common trait. They treat primary research as a core competency, not a vendor relationship. They have systematised how they gather intelligence, verify claims, and synthesise findings into conclusions that can survive scrutiny in an investment committee.

What follows is how they do it.

Private equity holding periods have more than doubled since 2007, fundamentally changing the diligence equation.

Before you pick up the phone

Primary research begins before you talk to anyone. You need a clear hypothesis and specific questions that, when answered, will move your conviction on the deal.

The best deal teams call these Key Intelligence Questions. KIQs are the three to five critical questions that your investment decision depends on. They should be specific enough that a clear answer changes your view on valuation, risk, or deal structure.

The difference between a weak KIQ and a strong one is the difference between wasted calls and investment-grade intelligence. A weak KIQ asks whether the market is growing. Everyone already knows the answer, and even if they do not, the answer rarely changes a deal. A strong KIQ asks whether the target's top 10 customers will increase spending by 15% or more over the next 18 months, and what would cause them to switch providers. That question, answered with evidence, directly impacts how you underwrite revenue.

Your KIQs should map directly to your investment thesis. If you are underwriting revenue growth, you need KIQs about customer retention and wallet share. If you are betting on margin expansion, you need KIQs about pricing power and cost structure. If you are concerned about competitive dynamics, you need KIQs about win rates, deal displacement, and technology substitution risk.

The discipline of writing tight KIQs forces clarity about what you actually need to learn. It also prevents the most common failure mode in primary research: conducting dozens of calls that generate interesting colour but never answer the question that matters.

Mapping the ecosystem

Once you have your questions, you need to identify who can answer them. The best deal teams think about this systematically, mapping concentric circles around the target asset.

The inner circle includes direct customers, suppliers, and employees. These are the people with firsthand experience of the target's products, operations, and culture. Customers tell you about satisfaction and switching risk. Suppliers tell you about payment practices and demand signals. Employees tell you about what actually happens inside the building, not what the management presentation claims.

The middle circle includes competitors, channel partners, and former employees. Competitors are often among the most valuable sources in primary research because they have little reason to flatter the target and will speak frankly about market dynamics and its weaknesses. Channel partners see how products actually sell and where friction exists. Former employees, particularly those who left recently, can speak candidly about operational reality without current employment constraints.

The outer circle includes industry analysts, regulators, and adjacent market participants. These sources provide context and pattern recognition. They may not know the target intimately, but they understand the broader dynamics shaping the market.

You need voices from all three circles to triangulate truth. A customer tells you they love the product. A competitor tells you the customer is actively evaluating alternatives. A former employee tells you the product roadmap has stalled. Each perspective is incomplete. Together, they reveal reality.
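To make the three-circle rule operational, a team might tag each expert in its panel by circle and check coverage before calls begin. The sketch below is a minimal illustration under that assumption: the grouping of source types follows the paragraphs above, while the `Expert` record and the `circle_of` and `missing_circles` helpers are hypothetical names, not part of any real tool.

```python
from dataclasses import dataclass

# Source types grouped into the three concentric circles described above.
CIRCLES = {
    "inner": {"customer", "supplier", "employee"},
    "middle": {"competitor", "channel_partner", "former_employee"},
    "outer": {"industry_analyst", "regulator", "adjacent_participant"},
}


@dataclass
class Expert:
    name: str
    source_type: str  # one of the source types listed in CIRCLES


def circle_of(source_type: str) -> str:
    """Return the circle a source type belongs to."""
    for circle, types in CIRCLES.items():
        if source_type in types:
            return circle
    raise ValueError(f"unknown source type: {source_type}")


def missing_circles(panel: list[Expert]) -> list[str]:
    """List circles with no expert in the panel, ordered inner to outer."""
    covered = {circle_of(e.source_type) for e in panel}
    return [c for c in CIRCLES if c not in covered]


panel = [
    Expert("A", "customer"),
    Expert("B", "customer"),
    Expert("C", "competitor"),
]
print(missing_circles(panel))  # ['outer']
```

A gap in any circle is a prompt to recruit before interviews start, rather than discovering at synthesis time that a perspective is missing.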

The middleman problem

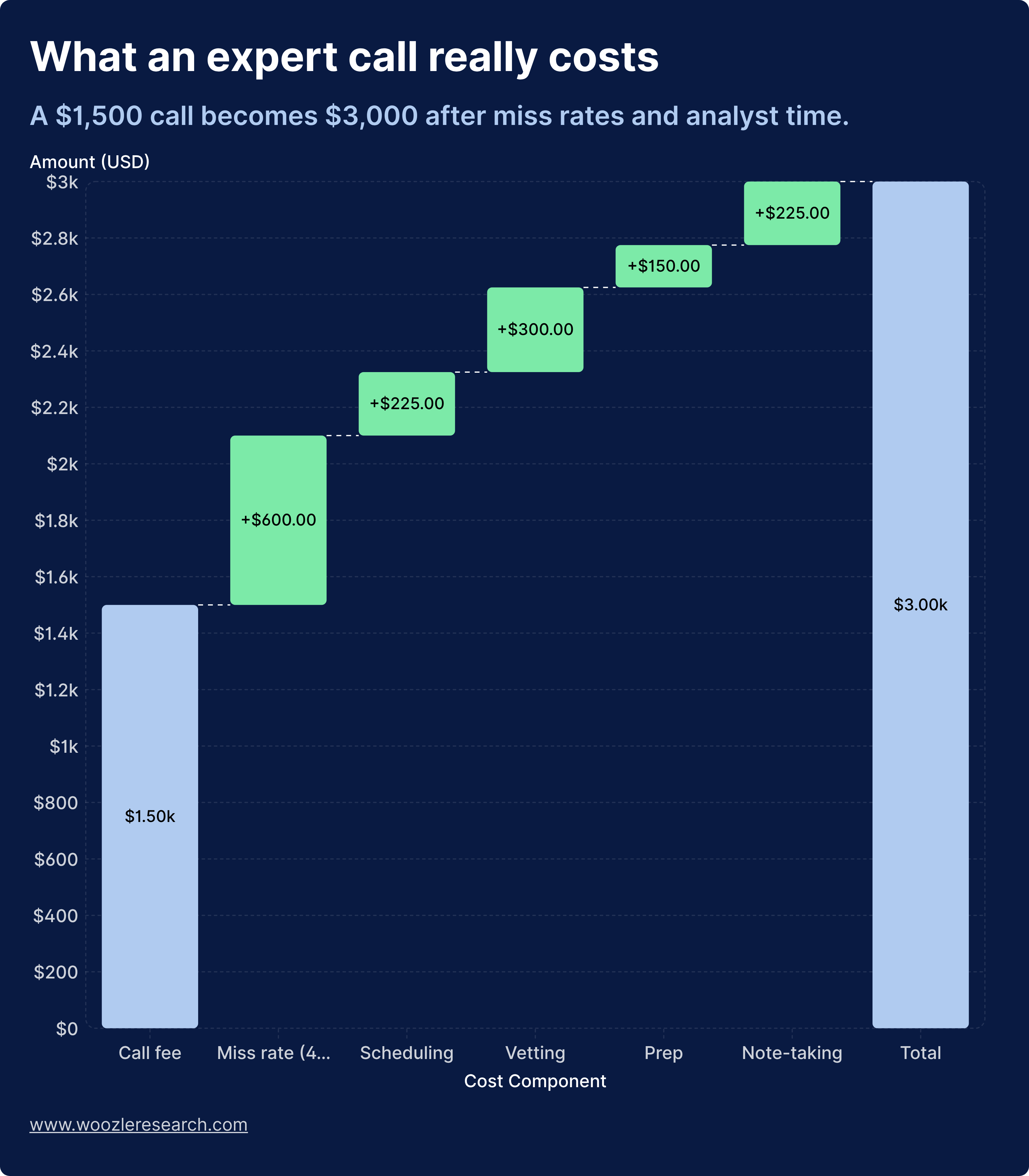

Finding the right voices often means working with expert networks. These platforms connect investors with industry professionals who can provide perspective on specific markets, companies, or trends. The industry has grown to $1.9 billion, with expert network companies charging rates that often start at $1,000 per hour. Elite experts command up to $5,000 or more per hour.

But the sticker price dramatically understates the true cost.

When you factor in the 40% miss rate on calls and the analyst time spent on logistics, vetting, and note-taking, the real cost per useful insight approaches $2,000. You are paying research prices for access, then doing all the actual research work yourself.
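The arithmetic behind that figure can be made explicit. This sketch uses the $1,000 starting rate and the 40% miss rate cited above; the analyst-time inputs (1.5 hours per call at $100 per hour) are hypothetical values chosen purely for illustration, not figures from the source.

```python
def cost_per_useful_insight(
    fee_per_call: float,
    miss_rate: float,
    analyst_hours_per_call: float,
    analyst_hourly_cost: float,
) -> float:
    """Fully loaded cost of each call that actually yields a useful insight.

    Every call, useful or not, incurs the fee plus analyst time for logistics,
    vetting, and note-taking; only (1 - miss_rate) of calls pay off.
    """
    if not 0 <= miss_rate < 1:
        raise ValueError("miss_rate must be in [0, 1)")
    loaded_cost_per_call = fee_per_call + analyst_hours_per_call * analyst_hourly_cost
    return loaded_cost_per_call / (1 - miss_rate)


# Fee and miss rate from the text; the analyst-time inputs are hypothetical.
fee_only = cost_per_useful_insight(1_000, 0.40, 0, 0)
loaded = cost_per_useful_insight(1_000, 0.40, 1.5, 100)
print(round(fee_only), round(loaded))  # 1667 1917
```

Because the miss rate sits in the denominator, it inflates both the fee and the analyst time spent on every call, useful or not.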

Expert networks do provide value. Some 81% of investment professionals believe that talking to experts is legitimate and value-adding. The question is how that value gets delivered.

When you use expert networks, verify that the expert's profile matches your specific question, not just the industry. An expert who spent 10 years in enterprise software sales cannot automatically speak to a specific vertical SaaS company's ability to move upmarket. Verify that the expert has recent, direct experience from the past 12 to 18 months; markets change quickly, and expertise degrades. Verify that the expert has not been recycled from a shared database your competitors have already tapped. And verify that the pricing model rewards outcomes, not just call volume.

The alternative is cold outreach. LinkedIn, industry conferences, and direct sourcing often yield better experts than networks. These experts have no middleman monetising their knowledge, which means they are more likely to give you unfiltered views. Response rates sit around 15 to 20% when your targeting is tight. That is enough to build a panel of 10 to 15 experts for most projects.
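Working backwards from a target panel size gives a rough outreach budget. This sketch uses the 15 to 20% response rates and the 10 to 15 expert panel sizes quoted above. It assumes every respondent qualifies and joins the panel, which will rarely hold in practice, so treat the result as a floor. Rates are passed as whole percentages to keep the division exact.

```python
import math


def outreach_needed(panel_size: int, response_rate_pct: int) -> int:
    """Minimum number of targeted approaches expected to yield `panel_size` responses.

    Assumes every respondent qualifies, so the real number will be higher.
    """
    if not 0 < response_rate_pct <= 100:
        raise ValueError("response_rate_pct must be in (0, 100]")
    return math.ceil(panel_size * 100 / response_rate_pct)


# Best case: small panel, high response rate. Worst case: the reverse.
print(outreach_needed(10, 20))  # 50
print(outreach_needed(15, 15))  # 100
```

On the same assumptions, tighter targeting that lifts the response rate from 15% to 20% cuts the outreach needed for a 15-person panel from 100 approaches to 75.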

Your firm's portfolio company executives, limited partners, and advisory board members often know the best voices in any given market. A warm introduction from a trusted source dramatically improves both response rates and candour.

"The true cost of an expert call is more than double the sticker price if you factor in miss rates and analyst time spent on logistics"

The conversation that counts

How you structure and conduct interviews determines whether you get rehearsed talking points or investment-grade intelligence.

The best deal teams use what might be called the blind interview technique. They never disclose the target company name upfront unless absolutely necessary. Instead, they describe the business model, size, and market position in neutral terms. You might say you are researching a $50 million revenue SaaS company in the HR tech space that serves mid-market customers and has been growing 30% annually. This approach reduces bias and gets you the expert's genuine view of the market dynamics rather than their opinion of one specific player. You can reveal the target later in the conversation once you have established baseline market views.

The interview itself should follow a funnel structure. The opening takes five minutes. Establish the expert's background, role, and direct experience with the relevant market or customer segment. You are verifying that the expert can actually answer your questions before you invest time in the conversation.

Market context takes 10 minutes. Ask broad questions about market trends, competitive dynamics, and customer behaviour patterns. This establishes the expert's frame of reference and often surfaces unexpected insights about industry structure.

Thesis testing takes 20 minutes. This is the core of the interview. Ask specific questions tied to your KIQs. This is where you validate or invalidate your investment assumptions. Push for specificity. Ask for examples. Request names, numbers, and timelines.

Probing takes 10 minutes. Follow unexpected answers, ask for specific examples, and push on vague claims. The best insights often emerge when you probe something the expert mentioned in passing.

The closing takes five minutes. Ask what question you should have asked but did not. Ask for referrals to other experts. The expert often knows better than you who else can illuminate the topic.

The funnel structure of an expert interview: opening (5 min), market context (10 min), thesis testing (20 min), probing (10 min), closing (5 min).
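One way to keep calls on the funnel is to encode it as a timed guide. The sketch below uses the five stages and durations from the text; the `Stage` type and the `schedule` helper are illustrative assumptions, not a standard tool.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Stage:
    name: str
    minutes: int
    goal: str


FUNNEL = [
    Stage("Opening", 5, "Verify background and direct experience"),
    Stage("Market context", 10, "Broad trends, competition, customer behaviour"),
    Stage("Thesis testing", 20, "Specific questions tied to the KIQs"),
    Stage("Probing", 10, "Follow unexpected answers, push on vague claims"),
    Stage("Closing", 5, "Missed questions and referrals"),
]


def schedule(stages: list[Stage]) -> list[tuple[int, int, str]]:
    """Return (start_minute, end_minute, stage_name) for each stage in order."""
    out, t = [], 0
    for s in stages:
        out.append((t, t + s.minutes, s.name))
        t += s.minutes
    return out


for start, end, name in schedule(FUNNEL):
    print(f"{start:>2}-{end:>2} min  {name}")
print("Total:", sum(s.minutes for s in FUNNEL), "minutes")  # Total: 50 minutes
```

The schedule makes the weighting visible: thesis testing occupies minutes 15 to 35, or 40% of the 50-minute call.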

Question design matters enormously. A weak question asks whether customer satisfaction is high. A strong question asks the expert to walk you through the last time they evaluated whether to renew or switch providers, and what factors drove that decision. A weak question asks whether the company is gaining market share. A strong question asks who won the deals they lost in the past year, and why.

Record interviews when legally permissible and with explicit consent. Transcripts catch details you miss in real time and create an audit trail for compliance. Your notes should capture four things: direct quotes that support or contradict your thesis; specific data points such as customer counts, pricing, and churn rates; unprompted concerns or red flags; and referrals to other experts or data sources. Tag each insight with the expert's profile and confidence level. You will need this for triangulation.

"The best questions make the expert tell a story with names, numbers, and timelines. Stories are harder to fake than opinions."

From fragments to conviction

Raw interview notes do not move conviction. You need to synthesise findings into clear, defensible conclusions.

Quantitative analysis helps where possible. Net Promoter Score (NPS) measures customer loyalty through a simple question: on a scale of 0 to 10, how likely are you to recommend this product or service? Respondents who answer 9 or 10 are promoters, those who answer 0 to 6 are detractors, and those who answer 7 or 8 are passives. Calculate NPS by subtracting the percentage of detractors from the percentage of promoters, which gives a score between -100 and 100. An NPS above 50 indicates strong customer loyalty. Below 0 signals serious retention risk. This metric gives you a quantifiable read on soft factors like brand strength and customer satisfaction.
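The calculation is easy to script. This sketch applies the standard Net Promoter bands, spelled out in the docstring; the ten sample scores are invented for illustration and do not come from any real interview programme.

```python
def nps(scores: list[int]) -> float:
    """Net Promoter Score from 0-10 'likelihood to recommend' answers.

    Promoters score 9-10 and detractors 0-6; passives (7-8) count only
    in the denominator. Returns a value between -100 and 100.
    """
    if not scores:
        raise ValueError("need at least one score")
    if any(not 0 <= s <= 10 for s in scores):
        raise ValueError("scores must be between 0 and 10")
    promoters = sum(1 for s in scores if s >= 9)
    detractors = sum(1 for s in scores if s <= 6)
    return 100 * (promoters - detractors) / len(scores)


# Hypothetical answers from ten customer interviews.
answers = [10, 9, 9, 8, 7, 9, 10, 6, 3, 9]
print(nps(answers))  # 40.0
```

A score of 40 from this sample would fall below the 50 threshold associated above with strong loyalty, but well clear of the 0 line that signals retention risk.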

Qualitative synthesis requires more structure. Build a matrix that maps themes across experts. For each theme, document the supporting evidence, the contradicting evidence, and your confidence level. On pricing power, you might find that six of eight customers said they would accept a 10% price increase, while two of eight said they are actively evaluating cheaper alternatives. That gives you medium-high confidence. This format forces you to acknowledge contradictory data and assign confidence levels based on source quality and consistency.
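A theme matrix of this kind maps naturally onto a small data structure. In this sketch, each `Theme` keeps its supporting and contradicting evidence side by side, and confidence is derived from the share of experts who support it. The cut-offs in `CONFIDENCE_BANDS` are hypothetical, chosen so that the six-of-eight pricing example lands at medium-high, and the sketch deliberately ignores source quality, which a real team would weigh as well.

```python
from dataclasses import dataclass, field

# Hypothetical cut-offs on the share of experts supporting a theme.
# This sketch ignores source quality; it measures consistency only.
CONFIDENCE_BANDS = [(0.85, "high"), (0.70, "medium-high"), (0.50, "medium")]


@dataclass
class Theme:
    name: str
    supporting: list[str] = field(default_factory=list)     # evidence notes
    contradicting: list[str] = field(default_factory=list)  # evidence notes

    def support_share(self) -> float:
        total = len(self.supporting) + len(self.contradicting)
        return len(self.supporting) / total if total else 0.0

    def confidence(self) -> str:
        share = self.support_share()
        for cutoff, label in CONFIDENCE_BANDS:
            if share >= cutoff:
                return label
        return "low"


pricing = Theme(
    "Pricing power",
    supporting=[f"Customer {i}: would accept a 10% increase" for i in range(1, 7)],
    contradicting=[f"Customer {i}: evaluating cheaper alternatives" for i in (7, 8)],
)
print(pricing.support_share(), pricing.confidence())  # 0.75 medium-high
```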

The strongest conclusions come from multiple, independent sources. The gold standard is when customers, competitors, and former employees all confirm the same insight. A caution flag is when management says one thing and customers say another. A red flag is when you cannot find any independent source to validate a key management claim.

When sources conflict, weight them by proximity to ground truth. A current customer's view on retention risk matters more than an industry analyst's opinion.
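The tiers above can be written down as an explicit rule so that every key management claim is classified the same way. This is a minimal sketch under stated assumptions: findings are recorded per source type as "confirms" or "contradicts", the set of sources counted as independent is a judgement call, and the "partially corroborated" bucket is added here for claims that fall between the tiers the text describes.

```python
from enum import Enum


class Verdict(str, Enum):
    GOLD_STANDARD = "gold standard"
    PARTIAL = "partially corroborated"  # hypothetical in-between bucket
    CAUTION = "caution flag"
    RED_FLAG = "red flag"


# The three source types the text names for the gold standard.
GOLD_SOURCES = {"customer", "competitor", "former_employee"}
# Which sources count as independent of management is a judgement call.
INDEPENDENT_SOURCES = GOLD_SOURCES | {"supplier", "channel_partner", "industry_analyst"}


def triangulate(findings: dict[str, str]) -> Verdict:
    """Classify one key management claim by what outside sources said about it.

    `findings` maps a source type to "confirms" or "contradicts"; sources with
    no view on the claim are simply left out.
    """
    confirming = {
        source for source, view in findings.items()
        if view == "confirms" and source in INDEPENDENT_SOURCES
    }
    if not confirming:
        return Verdict.RED_FLAG  # no independent validation at all
    if findings.get("customer") == "contradicts":
        return Verdict.CAUTION  # customers disagree with management
    if GOLD_SOURCES <= confirming:
        return Verdict.GOLD_STANDARD
    return Verdict.PARTIAL


print(triangulate({"customer": "confirms", "competitor": "confirms",
                   "former_employee": "confirms"}).value)  # gold standard
print(triangulate({"customer": "contradicts", "competitor": "confirms"}).value)  # caution flag
print(triangulate({"management": "confirms"}).value)  # red flag
```

Checking for missing validation first means an uncorroborated claim is reported as a red flag even if customers also dispute it, since the red flag is the stronger of the two signals.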

Your primary research memo should answer each KIQ with a clear conclusion: validated, invalidated, or inconclusive. For each, include the supporting evidence with source attribution; your confidence level and key assumptions; and the implications for valuation, deal structure, or the investment decision. The memo should be defensible in an investment committee (IC) meeting. If a partner asks how you know something, you should be able to point to specific expert quotes and triangulated data.

"The strongest conclusions come when customers, competitors, and former employees all confirm the same insight."

The compliance layer

Primary research carries legal and reputational risk if you are not careful about information sources and documentation.

You cannot trade on material non-public information (MNPI) obtained through expert calls. Period. MNPI is information that is not publicly available, would likely influence an investor's decision, and came from someone with a duty of confidentiality.

Red flags include current employees discussing non-public financials, board members or executives sharing strategic plans, and suppliers disclosing specific order volumes or contract terms. If an expert starts sharing information that feels too specific or confidential, stop the conversation and consult your compliance team.

Maintain records of expert consent forms and conflict-of-interest disclosures; call recordings and transcripts; payment records and expert profiles; and research memos with source attribution. These records protect you if a regulator questions your information sources or if a deal goes sideways and someone alleges you missed obvious red flags.

During primary research, certain signals should trigger deeper diligence or kill the deal entirely. Customer concentration becomes a red flag when multiple experts mention the same two or three customers as critical to revenue. Pricing pressure becomes a red flag when customers consistently describe the product as commoditised or interchangeable. Churn acceleration becomes a red flag when former employees or customers mention a recent uptick in cancellations. Management credibility becomes a red flag when external experts contradict key claims in the management presentation. Competitive threat becomes a red flag when multiple sources mention a new entrant or technology shift that management has not addressed. Regulatory risk becomes a red flag when industry participants expect new regulations that would materially impact the business model.
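Most of these triggers are judgement calls, but the first can be checked mechanically once interview notes are tagged. This sketch assumes each note records which customer an expert described as critical to revenue; the thresholds (at least two distinct experts, at most three repeated names) are hypothetical readings of "multiple experts" and "the same two or three customers".

```python
from collections import defaultdict


def concentration_flag(
    mentions: list[tuple[str, str]], min_experts: int = 2, max_customers: int = 3
) -> tuple[bool, list[str]]:
    """Flag customer concentration from tagged interview notes.

    `mentions` holds (expert_id, customer_name) pairs, one per time an expert
    described that customer as critical to the target's revenue. Returns the
    flag and the sorted list of customers named by at least `min_experts`
    distinct experts.
    """
    experts_by_customer: dict[str, set[str]] = defaultdict(set)
    for expert, customer in mentions:
        experts_by_customer[customer].add(expert)
    repeated = sorted(
        c for c, experts in experts_by_customer.items() if len(experts) >= min_experts
    )
    return (0 < len(repeated) <= max_customers, repeated)


notes = [
    ("exp1", "Acme"), ("exp2", "Acme"), ("exp4", "Acme"),
    ("exp2", "Globex"), ("exp3", "Globex"),
    ("exp3", "Initech"),
]
print(concentration_flag(notes))  # (True, ['Acme', 'Globex'])
```

Counting distinct experts rather than raw mentions stops one talkative source from manufacturing a concentration signal on their own.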

Technology as accelerant

AI is changing due diligence workflows, but it has limits.

Increasingly, financial services firms and private equity shops use AI for document due diligence, automating the review of financial statements, legal documents, and contracts. This can compress the process from weeks to days. But document analysis tells you what the company says. Primary research tells you what customers, competitors, and channel partners actually think. That gap is where most deal failures originate.

AI can help you transcribe and tag interview recordings, identify themes across dozens of expert calls, flag contradictions between expert views and management claims, and generate initial interview guides based on your thesis. AI cannot replace the judgment required to probe unexpected answers. It cannot verify whether an expert is credible or recycled. It cannot triangulate soft signals into investment-grade conclusions. And it cannot take accountability for the quality of the intelligence.

Use AI to eliminate grunt work. Keep the thinking in-house.

"Document analysis tells you what the company says. Primary research tells you what customers, competitors, and channel partners actually think. That gap is where most deal failures originate."

The structural shift

The primary research industry has optimised for the wrong metric. Expert networks and survey platforms are built around call volume and survey completes, not correct answers. That explains the industry's 50 to 70% margins, its recycled expert databases, and pricing models that reward introductions rather than outcomes.

Investment teams are starting to push back. They are asking why they should pay research prices for access, then do all the actual research work themselves: vetting experts, designing surveys, cleaning data, and carrying compliance risk.

The shift is from buying access to buying finished intelligence.

Finished intelligence means you submit a 10-minute brief with your KIQs. The provider recruits fresh, correctly profiled experts. You receive verified, structured outputs ready to drop into IC memos. You only pay for work that genuinely enhances your decision.

This model protects analyst time, cuts the true cost per useful insight, and aligns provider incentives with investment outcomes rather than call volume. When you are holding assets for 8.5 years and running 74% of your deals as add-ons, the cumulative waste from the middleman model shows up in your returns.

The shift from buying access to buying finished intelligence: how the primary research value chain is being restructured.

Closing thoughts

Primary research due diligence is not a checkbox exercise. It separates investment professionals who are precisely right from those who are generally informed.

The methodologies outlined here are what top-tier deal teams use to validate theses and uncover hidden risks: tight KIQs, blind interviews, triangulation across source types, and investment-grade verification. None of it is complicated. All of it requires discipline.

The nearly 60% of deals that fail because of poor due diligence share a common pattern: they rely on secondary research, management presentations, and surface-level expert calls instead of gathering original intelligence from the right sources.

You can avoid that outcome by treating primary research as a core competency rather than a vendor relationship. When your conviction, sizing, and timing depend on what the market actually thinks and not what the deck says, investment-grade primary research becomes the difference between a write-up and a write-down.